In this post, we provide answers to NPTEL Deep Learning – IIT Ropar Assignment 1. The answers are provided for reference only. Please complete your assignment based on your own knowledge.

NPTEL Deep Learning – IIT Ropar Week 1 Assignment Answer 2023

1. The table below shows the temperature and humidity data for two cities. Is the data linearly separable?

- Yes

- No

- Cannot be determined from the given information

Answer :- Yes

2. What is the perceptron algorithm used for?

- Clustering data points

- Finding the shortest path in a graph

- Classifying data

- Solving optimization problems

Answer :- Classifying data

3. What is the most common activation function used in perceptrons?

- Sigmoid

- ReLU

- Tanh

- Step

Answer :- Click Here

4. Which of the following Boolean functions cannot be implemented by a perceptron?

- AND

- OR

- XOR

- NOT

Answer :- XOR
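As a rough illustration of why XOR stands apart from AND and OR, the sketch below brute-forces a small grid of weights and biases for a single threshold unit. The grid range, the helper names, and the convention "output 1 when w1*x1 + w2*x2 + b >= 0" are assumptions made for this example. A failed grid search is not a proof, but it agrees with the known fact that XOR is not linearly separable.

```python
from itertools import product

# Truth tables for two-input Boolean functions (NOT takes one input, so it is left out).
TRUTH_TABLES = {
    "AND": {(0, 0): 0, (0, 1): 0, (1, 0): 0, (1, 1): 1},
    "OR": {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 1},
    "XOR": {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0},
}

# Hypothetical search grid: -4.0 to 4.0 in steps of 0.5.
GRID = [v / 2 for v in range(-8, 9)]


def perceptron_output(w1, w2, b, x1, x2):
    """Single threshold unit (assumed convention: fire when the weighted sum is >= 0)."""
    return 1 if w1 * x1 + w2 * x2 + b >= 0 else 0


def find_weights(table, grid):
    """Return the first (w1, w2, b) on the grid that reproduces the table, else None."""
    for w1, w2, b in product(grid, repeat=3):
        if all(perceptron_output(w1, w2, b, x1, x2) == y
               for (x1, x2), y in table.items()):
            return (w1, w2, b)
    return None


for name, table in TRUTH_TABLES.items():
    print(name, find_weights(table, GRID))
```

AND and OR each yield some weight triple, while XOR yields `None` on this grid.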

5. We are given 4 points in R2, say x1=(0,1), x2=(−1,−1), x3=(2,3), x4=(4,−5). The labels of x1, x2, x3, x4 are given to be −1, 1, −1, 1. We initiate the perceptron algorithm with an initial weight w0=(0,0) on this data. What will be the value of w0 after the algorithm converges? (Take points in sequential order from x1 to x4.) (An update happens when the value of the weight changes.)

- (0,0)

- (−2,−2)

- (−2,−3)

- (1,1)

Answer :- Click Here

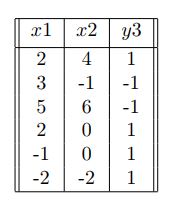

6. We are given the following data:

Can you classify every label correctly by training a perceptron algorithm? (assume bias to be 0 while training)

- Yes

- No

Answer :- Click Here

7. Suppose we have a Boolean function that takes 5 inputs x1, x2, x3, x4, x5. We have an MP neuron with parameter θ=1. For how many inputs will this MP neuron give output y=1?

- 21

- 31

- 30

- 32

Answer :- Click Here
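The count can be checked by enumeration. The sketch below assumes the usual McCulloch Pitts convention with no inhibitory inputs: the neuron outputs 1 when the sum of its binary inputs is at least θ. The function names are illustrative only.

```python
from itertools import product


def mp_neuron(inputs, theta):
    """McCulloch Pitts neuron (assumed: no inhibitory inputs, fires when sum >= theta)."""
    return 1 if sum(inputs) >= theta else 0


def count_firing_inputs(n_inputs, theta):
    """Count the binary input combinations for which the neuron outputs 1."""
    return sum(mp_neuron(bits, theta) for bits in product((0, 1), repeat=n_inputs))


print(count_firing_inputs(5, 1))  # 31
```

Under this assumption only the all-zero input fails to reach the threshold, so 2^5 − 1 = 31 of the 32 combinations give y=1.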

8. Which of the following best represents the meaning of the term “Artificial Intelligence”?

- The ability of a machine to perform tasks that normally require human intelligence

- The ability of a machine to perform simple, repetitive tasks

- The ability of a machine to follow a set of pre-defined rules

- The ability of a machine to communicate with other machines

Answer :- The ability of a machine to perform tasks that normally require human intelligence

9. Which of the following statements is true about error surfaces in deep learning?

- They are always convex functions.

- They can have multiple local minima.

- They are never continuous.

- They are always linear functions.

Answer :- They can have multiple local minima.

10. What is the output of the following MP neuron for the AND Boolean function?

y = 1, if x1+x2+x3 ≥ 1; y = 0, otherwise

- y=1 for (x1,x2,x3)=(0,1,1)

- y=0 for (x1,x2,x3)=(0,0,1)

- y=1 for (x1,x2,x3)=(1,1,1)

- y=0 for (x1,x2,x3)=(1,0,0)

Answer :- Click Here

NPTEL Deep Learning – IIT Ropar Assignment 1 Answers 2022 [July-Dec]

1. Pick out the appropriate shape of decision boundary if the number of inputs is three.

a. Point

b. Line

c. Plane

d. Hyperplane

Answer:- c

2. Pick out the part of a biological neuron that is responsible for receiving signals from other neurons.

a. Dendrite

b. Synapse

c. Soma

d. Axon

Answer:- a

3. Which of the following is considered as a drawback of Deep Learning?

a. Numerical stability

b. Overfitting never occurs

c. Sharp minima

d. Overfitting always occurs

Answer:- c

4. Neurons play a vital role in how humans respond to the outside world. When does this occur?

a. Any one neuron gets activated

b. All the neurons of the massively parallel interconnected network of neurons are activated

c. Specific set of these neurons fire and relay the information to other neurons

d. At least 10% of the total number of neurons in the brain

Answer:- c

5. Consider a McCulloch Pitts neuron for which the inputs are x1, x2 and x3. Also, the aggregate function g(x) is an OR function. What is the thresholding parameter for the same?

a. 0

b. 1

c. 2

d. 3

Answer:- b
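A quick way to confirm the threshold is to test each candidate value against the OR truth table. This is a minimal sketch that assumes the neuron fires when the sum of its binary inputs is at least θ and that none of the inputs is inhibitory. The helper names are hypothetical.

```python
from itertools import product


def mp_neuron(inputs, theta):
    """McCulloch Pitts neuron (assumed: fires when the input sum is >= theta)."""
    return 1 if sum(inputs) >= theta else 0


def implements_or(theta, n_inputs=3):
    """True if the neuron matches Boolean OR on every binary input combination."""
    return all(mp_neuron(bits, theta) == int(any(bits))
               for bits in product((0, 1), repeat=n_inputs))


matching = [theta for theta in range(4) if implements_or(theta)]
print(matching)  # [1]
```

With θ=0 the neuron fires even on the all-zero input, and with θ=2 or θ=3 it misses inputs that have a single 1, which leaves θ=1.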

6. Which of the following statements are True?

Statement I. A McCulloch Pitts neuron can be used to represent any Boolean function

Statement II. If any of the inputs in a McCulloch Pitts neuron is inhibitory, then the output will be zero

a. Only I

b. Only II

c. Both

d. None

Answer:- b

7. Pick out the Boolean function that is not linearly separable.

a. AND

b. OR

c. NOR

d. XOR

Answer:- d

8. In a perceptron learning algorithm, what is the initial value of the weights before the algorithm starts learning?

a. All weights set to zero

b. All weights set to one

c. All weights assigned random values

d. All weights assigned values specific to the application in hand

Answer:- c

9. What is the condition for convergence of a perceptron learning algorithm?

a. Always converges

b. Data is linearly separable

c. Data is linearly non-separable

d. May or may not converge depending on the data

Answer:- b
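The effect of separability on convergence can be seen with a small sketch of the perceptron learning algorithm. Several choices here are assumptions for illustration: weights start at zero so the run is reproducible (not the random initialization mentioned in the previous question), a constant 1 is appended to each input to act as a bias, labels are 0/1, and training stops after `max_epochs` passes. The function and variable names are hypothetical.

```python
def train_perceptron(samples, max_epochs=100):
    """Train on (inputs, label) pairs with labels in {0, 1}.

    Returns (weights, converged). The last weight multiplies a constant 1 (bias).
    """
    n_weights = len(samples[0][0]) + 1
    w = [0.0] * n_weights  # assumed zero start for reproducibility
    for _ in range(max_epochs):
        updated = False
        for x, label in samples:
            x_aug = list(x) + [1.0]
            activation = sum(wi * xi for wi, xi in zip(w, x_aug))
            if label == 1 and activation < 0:
                # Positive point on the wrong side: move w towards x.
                w = [wi + xi for wi, xi in zip(w, x_aug)]
                updated = True
            elif label == 0 and activation >= 0:
                # Negative point on the wrong side: move w away from x.
                w = [wi - xi for wi, xi in zip(w, x_aug)]
                updated = True
        if not updated:
            return w, True  # a full pass with no mistakes
    return w, False


OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

print(train_perceptron(OR_DATA))      # converges: the data is linearly separable
print(train_perceptron(XOR_DATA)[1])  # False: no pass is ever mistake-free
```

Raising `max_epochs` does not help in the XOR case, because no weight vector classifies all four points correctly.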

10. Select all the statements that hold TRUE for a Single Perceptron.

a. Inputs are weighted

b. Threshold is hand coded

c. Only Real inputs are allowed

d. Both Real and boolean inputs are allowed

e. Can solve only linearly separable data

Answer:- a, d, e

About Deep Learning IIT – Ropar

Deep Learning has received a lot of attention over the past few years and has been employed successfully by companies like Google, Microsoft, IBM, Facebook, Twitter etc. to solve a wide range of problems in Computer Vision and Natural Language Processing. In this course we will learn about the building blocks used in these Deep Learning based solutions. Specifically, we will learn about feedforward neural networks, convolutional neural networks, recurrent neural networks and attention mechanisms. We will also look at various optimization algorithms such as Gradient Descent, Nesterov Accelerated Gradient Descent, Adam, AdaGrad and RMSProp which are used for training such deep neural networks. At the end of this course, students will have knowledge of the deep architectures used for solving various Vision and NLP tasks.

COURSE LAYOUT

- Week 1 : (Partial) History of Deep Learning, Deep Learning Success Stories, McCulloch Pitts Neuron, Thresholding Logic, Perceptrons, Perceptron Learning Algorithm

- Week 2 : Multilayer Perceptrons (MLPs), Representation Power of MLPs, Sigmoid Neurons, Gradient Descent, Feedforward Neural Networks, Representation Power of Feedforward Neural Networks

- Week 3 : FeedForward Neural Networks, Backpropagation

- Week 4 : Gradient Descent (GD), Momentum Based GD, Nesterov Accelerated GD, Stochastic GD, AdaGrad, RMSProp, Adam, Eigenvalues and eigenvectors, Eigenvalue Decomposition, Basis

- Week 5 : Principal Component Analysis and its interpretations, Singular Value Decomposition

- Week 6 : Autoencoders and relation to PCA, Regularization in autoencoders, Denoising autoencoders, Sparse autoencoders, Contractive autoencoders

- Week 7 : Regularization: Bias Variance Tradeoff, L2 regularization, Early stopping, Dataset augmentation, Parameter sharing and tying, Injecting noise at input, Ensemble methods, Dropout

- Week 8 : Greedy Layerwise Pre-training, Better activation functions, Better weight initialization methods, Batch Normalization

- Week 9 : Learning Vectorial Representations Of Words

- Week 10: Convolutional Neural Networks, LeNet, AlexNet, ZF-Net, VGGNet, GoogLeNet, ResNet, Visualizing Convolutional Neural Networks, Guided Backpropagation, Deep Dream, Deep Art, Fooling Convolutional Neural Networks

- Week 11: Recurrent Neural Networks, Backpropagation through time (BPTT), Vanishing and Exploding Gradients, Truncated BPTT, GRU, LSTMs

- Week 12: Encoder Decoder Models, Attention Mechanism, Attention over images

CRITERIA TO GET A CERTIFICATE

Average assignment score = 25% of average of best 8 assignments out of the total 12 assignments given in the course.

Exam score = 75% of the proctored certification exam score out of 100

Final score = Average assignment score + Exam score

YOU WILL BE ELIGIBLE FOR A CERTIFICATE ONLY IF AVERAGE ASSIGNMENT SCORE >=10/25 AND EXAM SCORE >= 30/75. If one of the 2 criteria is not met, you will not get the certificate even if the Final score >= 40/100.
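The criteria above can be worked through with a short sketch. It assumes each assignment and the exam are scored out of 100. The function name and the sample scores are hypothetical and are not taken from the course.

```python
def certificate_result(assignment_scores, exam_score):
    """Apply the certificate criteria to raw scores (assumed to be out of 100).

    Returns (assignment_component, exam_component, final_score, eligible).
    """
    best_eight = sorted(assignment_scores, reverse=True)[:8]
    # 25% of the average of the best 8 assignments.
    assignment_component = 0.25 * sum(best_eight) / len(best_eight)
    # 75% of the proctored exam score.
    exam_component = 0.75 * exam_score
    final_score = assignment_component + exam_component
    # Both thresholds must be met; the final score alone is not enough.
    eligible = assignment_component >= 10 and exam_component >= 30
    return assignment_component, exam_component, final_score, eligible


print(certificate_result([80] * 12, 50))   # (20.0, 37.5, 57.5, True)
print(certificate_result([100] * 12, 30))  # (25.0, 22.5, 47.5, False)
```

The second call shows the case described in the last sentence: the final score is above 40, but the exam component is below 30/75, so the certificate is not awarded.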